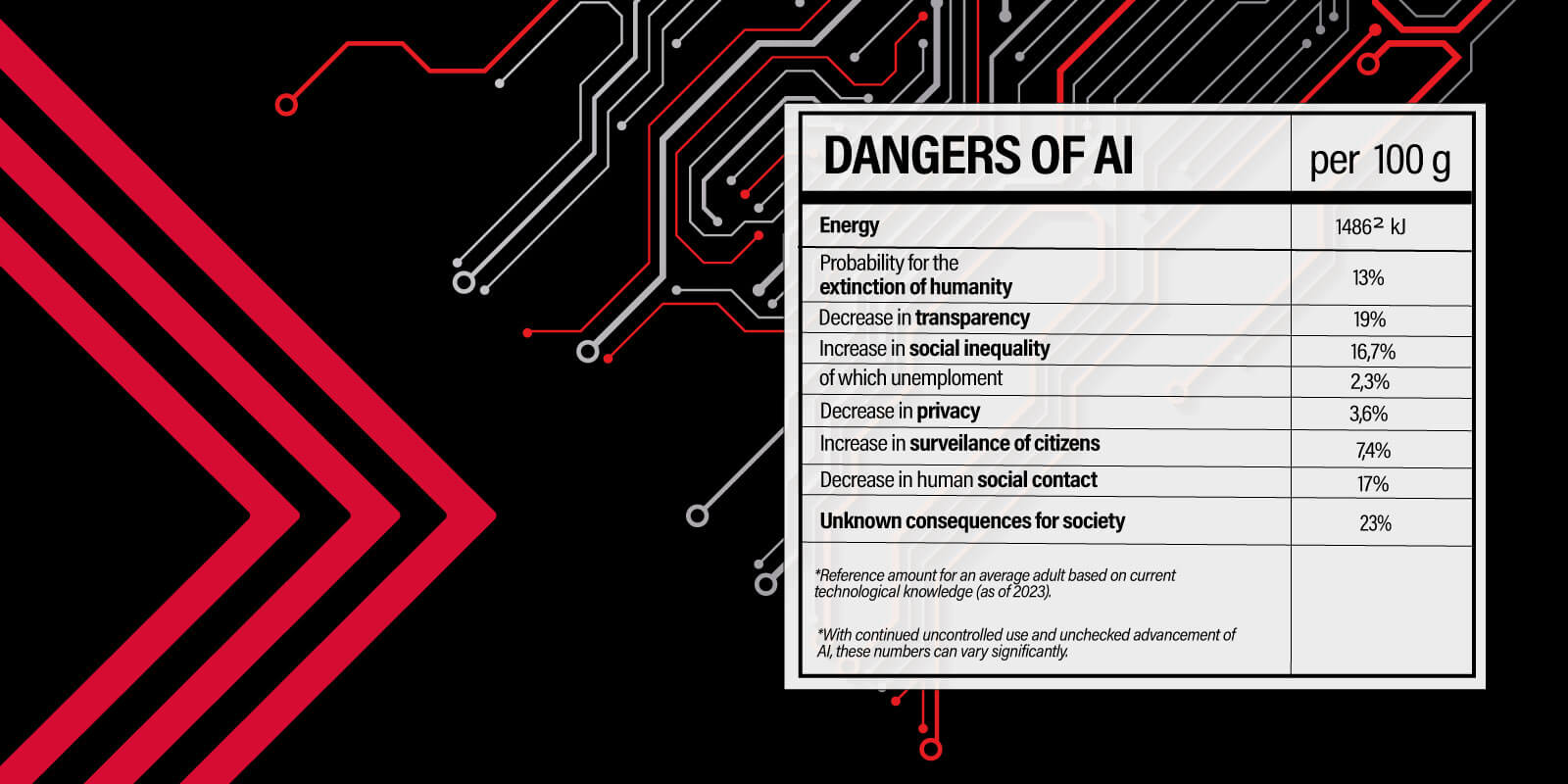

Have we become so mesmerized by technology that we’re completely blind to the threats it poses, both to individuals and to society at large? Jay Krihak, Executive Director at Crossmedia New York, urgently calls for controls to be put in place if we don’t want to fall victim to the dire consequences of unchecked AI.


Prior to computers, all industry was visible. People could see the rudders, sails, bolts and gears. It was easy to see how the parts of a machine made it work. Transparency was as easy as opening the car hood to look at the engine or looking up at an advertising billboard. And how things worked was documented by regulators. Even the secret formula of Coca-Cola – kept under lock and key from competitors – was approved by the FDA to prove it was safe for humans. Fast-forward to the fifth industrial age of AI, when technology is viewed as more magic than machinery. In today’s digital world of sites, apps and games, there’s no FDA review of algorithms or required testing to monitor societal impact.

The lock and key is often owned by one person, and the secret algorithmic formula is kept in a black box, never to be seen by anyone not named Mark, Sundar, Jeff or Elon. People tend to fear what they don’t understand. Recently, however, the opposite has happened: humans have become too trusting of technology and the people who create it. Look at the meteoric rise of OpenAI’s ChatGPT adoption and the cottage industry blooming on top of its API. To paraphrase Jeff Goldblum’s famous line in Jurassic Park, it appears that we’re building tech because we can – without thinking about whether we should. That sentiment was also echoed by the likes of OpenAI CEO Sam Altman and Geoffrey Hinton, the “Godfather of AI,” who quit Google so he could sound the alarm about the existential perils of AI.

Social media has taught us a lesson

With the ongoing, real-time experiment called social media, we have evidence that unchecked technology can have significant personal and societal consequences. History proves that black-box algorithms designed to optimize business metrics will have severe, harmful consequences that are often not predicted.

- Mental Health: Facebook access at colleges has led to a 7% increase in severe depression and a 20% increase in anxiety disorder.*

- Image Health: Instagram research has shown that usage of the platform leads to higher risk of eating disorders, depression, lower self-esteem, appearance anxiety and body dissatisfaction among young girls and women, while simultaneously increasing their desire for thinness and interest in cosmetic surgery.**

- Misinformation Spread: The heightened spread of misinformation from international and domestic sources threatens everything from national health (vaccines) to presidential elections.

The god complex of CEOs and companies preaching that their way is the best, most righteous and only path forward for AI is what got us algorithms that power vitriol, hate and misinformation in the name of increasing engagement. What happens when humans give super-powered AI a few goals, with guidance to achieve them but zero guardrails or oversight to pull it back? Unchecked AI is not an option.

With so much at stake, we can’t end up with another toothless “ad choices” set of self-regulation policies. We have to act now with the solutions available to us. First, it’s essential to legally require a certain level of transparency for oversight. We need laws in place for human and corporate accountability, especially when it comes to social media platforms. For our industry, the Network Advertising Initiative (NAI) is an example of an industry body established to ensure compliant collection and use of personal and sensitive data. When it comes to AI, we need a more advanced oversight body to audit and govern industry use. And companies that use AI must be beholden to it.

It’s also worth restricting which areas of industry AI can infiltrate until it becomes trustworthy. Health care, both physical and mental, is the clearest example of an area where misinformation can have lethal consequences. We must test the impact of AI in health care and elsewhere before releasing it to the public.

Augmentation, not authority

We desperately need a North Star for AI in advertising – one that safeguards more than just advertising and its practitioners. Here’s one suggestion: AI must only be used to remove friction in workflows and to augment and empower human creativity. However, even then, we must only use AI with proper consent, credit and compensation given to the owners of the IP that trains the models. And we must not take the results as fact without significant human intervention to establish proper trust and transparency. If we can agree on these principles, then maybe our industry’s incentives can be aligned for a change.

This article was first published on AdExchanger.

*https://mitsloan.mit.edu/ideas-made-to-matter/study-social-media-use-linked-to-decline-mental-health

**https://www.forbes.com/sites/kimelsesser/2021/10/05/heres-how-instagram-harms-young-women-according-to-research/?sh=5dc26f72255a
